OAIRA as an MCP Server: Your Research Platform Talks Directly to AI

#MCP #AI #integrations #OAIRA #engineering

David Olsson

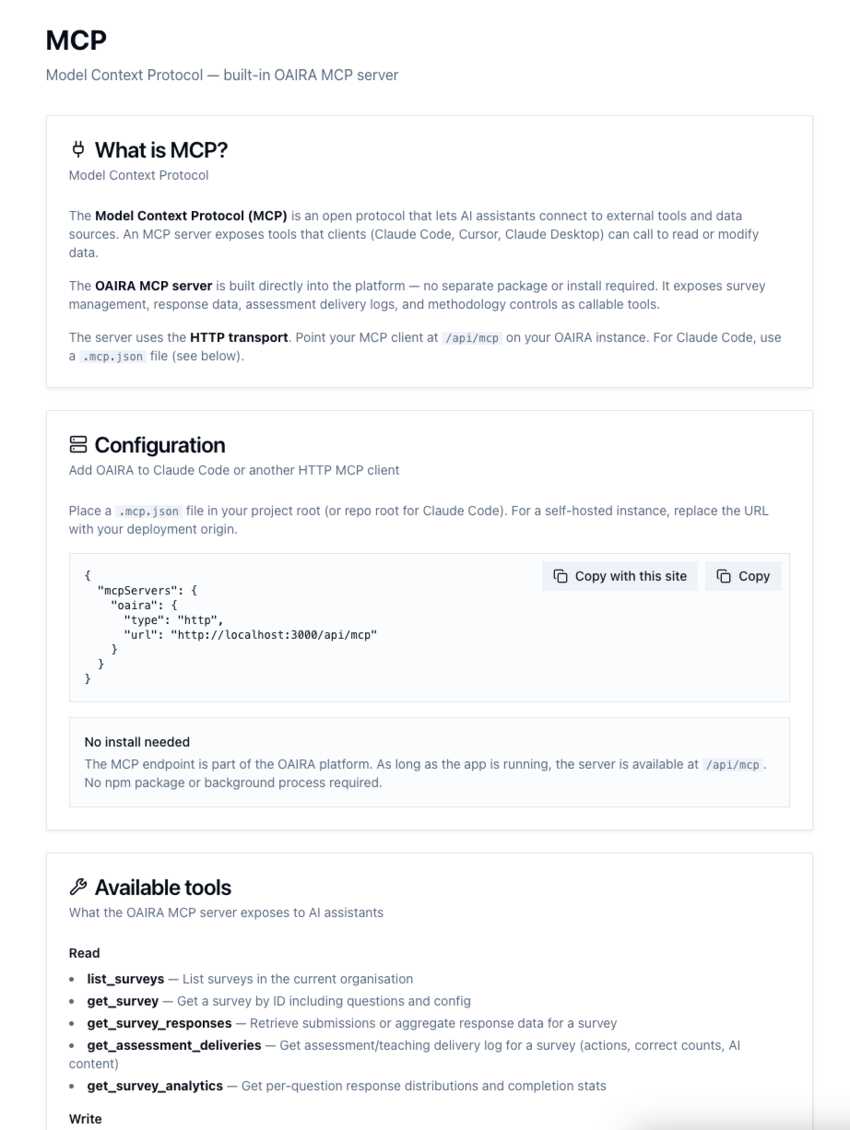

The Model Context Protocol (MCP) is becoming the connective tissue of the AI ecosystem. It is an open protocol that lets AI assistants such as Claude Code, Cursor, and Claude Desktop call external tools and read external data sources through one standard interface, instead of a custom integration or API wrapper for each one. It's the protocol that lets AI act, not just respond.

OAIRA ships with a built-in MCP server.

Not a plugin. Not a separate package to install. The server lives at /api/mcp on your OAIRA instance and is available the moment the platform is running. Point your MCP client at it and your AI assistant can read and write your research data directly.

Why This Matters

Most research platforms are built for human operators navigating a web interface. You click through dashboards, export CSVs, copy-paste insights into documents. The data lives in the platform; you have to go get it.

An MCP server inverts this. Instead of you going to OAIRA, OAIRA comes to wherever you're working — a Claude conversation, a Cursor session, a custom agent. Your research data becomes a live resource that AI can query, synthesize, and act on in real time.

This is the difference between a research tool and research infrastructure.

What OAIRA Exposes Over MCP

The OAIRA MCP server exposes a full read/write surface across your research operations.

Read tools:

- list_surveys: List all surveys in your organization
- get_survey: Retrieve a survey by ID, including all questions and configuration
- get_survey_responses: Pull submissions or aggregate response data for any survey
- get_assessment_deliveries: Access assessment and teaching delivery logs (actions, correct counts, AI content)
- get_survey_analytics: Per-question response distributions and completion statistics

Write tools let you create and deploy new surveys programmatically. Survey design, question structure, and methodology settings are all callable from an AI assistant without touching the UI.
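To make the tool surface concrete, here is a rough sketch of the messages an MCP client sends on your behalf. MCP is built on JSON-RPC 2.0, with `tools/list` for discovery and `tools/call` for invocation. The tool name `get_survey` comes from the list above; the `survey_id` argument name and its value are assumptions for illustration, since the real input schema is whatever the server reports through `tools/list`.

```python
import itertools
import json

# Each JSON-RPC request needs an id so the reply can be matched to it.
_request_ids = itertools.count(1)


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request envelope, the message format MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def tool_call(name, arguments):
    """Wrap a tool invocation in MCP's `tools/call` method."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


# Ask the server which tools it exposes and what arguments each one takes.
list_tools = jsonrpc_request("tools/list")

# ASSUMPTION: `survey_id` is a hypothetical argument name, not confirmed
# by the article. Check the schema returned by `tools/list` first.
get_survey = tool_call("get_survey", {"survey_id": "example-survey-id"})

print(json.dumps(get_survey, indent=2))
```

In practice you rarely build these by hand. An MCP client such as Claude Code performs the `initialize` handshake, lists the tools, and issues the calls for you; the sketch only shows what travels over the wire.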

Zero Install

Configuration is a single JSON file:

    {
      "mcpServers": {
        "oaira": {
          "type": "http",
          "url": "http://localhost:3000/api/mcp"
        }
      }
    }

Place .mcp.json at your project root. For Claude Code, that's it. No npm package. No background process. No auth ceremony beyond your existing OAIRA session.

For self-hosted or cloud deployments, replace http://localhost:3000 with your instance's origin and keep the /api/mcp path.
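If you manage several instances, the same file can be generated rather than edited by hand. The helper below is a minimal sketch, not part of OAIRA: `mcp_config` is a hypothetical function name and `https://research.example.com` is a placeholder origin. Only the `/api/mcp` path and the config shape come from the example above.

```python
import json
from urllib.parse import urlsplit

MCP_PATH = "/api/mcp"  # endpoint path stated in the article


def mcp_config(origin, server_name="oaira"):
    """Return the .mcp.json structure for an OAIRA instance at `origin`."""
    parts = urlsplit(origin)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        raise ValueError(f"expected an origin like https://host[:port], got {origin!r}")
    # Keep only scheme and host[:port]; any path on the input is discarded.
    url = f"{parts.scheme}://{parts.netloc}{MCP_PATH}"
    return {"mcpServers": {server_name: {"type": "http", "url": url}}}


# Placeholder origin for illustration; substitute your own instance.
config = mcp_config("https://research.example.com")
print(json.dumps(config, indent=2))
```

Writing the printed JSON to .mcp.json at the project root gives the same result as editing the URL manually.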

What This Unlocks

Once OAIRA is an MCP server, the use cases compound quickly.

Research-in-chat: Ask Claude "What were the top themes from our AI adoption survey?" and get a synthesized answer drawn from actual response data — not a summary you manually wrote.

Agentic workflows: Build agents that monitor survey completion rates, flag anomalies, and draft follow-up research briefs automatically — without any custom integration code.

In-IDE research access: In a Cursor session, reference live survey analytics alongside your product code. Understand how users experience the features you're building, in the same context where you're building them.

Programmatic study deployment: Chain survey creation into broader workflows. An AI agent can identify a research gap, author a new study using the write tools, and deploy it — all from a single prompt.
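As a rough illustration of the agentic workflow described above, the "flag anomalies" step can be as simple as a rule over completion statistics. This sketch assumes the agent has already fetched per-survey counts, for example via get_survey_analytics. The `SurveyStats` shape, the field names, the thresholds, and the sample numbers are all invented for illustration and do not reflect OAIRA's actual response format.

```python
from dataclasses import dataclass


@dataclass
class SurveyStats:
    """Hypothetical per-survey counts; not OAIRA's real response shape."""
    survey_id: str
    started: int
    completed: int

    @property
    def completion_rate(self):
        # Avoid division by zero for surveys nobody has started.
        return self.completed / self.started if self.started else 0.0


def flag_low_completion(stats, threshold=0.5, min_started=20):
    """Return surveys with enough starts to judge and a rate below threshold."""
    return [
        s for s in stats
        if s.started >= min_started and s.completion_rate < threshold
    ]


# Invented sample data, for illustration only.
sample = [
    SurveyStats("onboarding", started=200, completed=150),
    SurveyStats("ai-adoption", started=120, completed=30),
    SurveyStats("pilot", started=5, completed=1),
]

for s in flag_low_completion(sample):
    print(f"{s.survey_id}: {s.completion_rate:.0%} completion")
# prints "ai-adoption: 25% completion"
```

The `min_started` guard keeps small surveys like the "pilot" entry from being flagged on too little data. An agent would hand the flagged list back to the model to draft the follow-up brief.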

AI-Native, Not AI-Adjacent

Most platforms bolt AI on. A chat widget here, an "AI summary" button there. The underlying system remains human-operated, and AI is a convenience layer on top.

Building MCP in from the start is a different commitment. It means treating AI assistants as first-class consumers of your platform's data — building the infrastructure so AI can act, not just observe.

OAIRA's MCP server is one expression of that commitment. Your research data isn't trapped in a dashboard. It's a resource that any capable AI can use.

OAIRA is an AI-powered market research platform. The built-in MCP server is available to all users with no additional configuration beyond pointing your MCP client at /api/mcp.