Synthetic Data as Methodology: Simulation Before Fieldwork

#worksona #market-research #simulation #methodology #ai #synthetic-data

David Olsson

The standard research workflow runs in one direction: design an instrument, collect data from real participants, analyze results, draw conclusions. Iteration is expensive: every round of data collection costs time and money. Discovering that a question is ambiguous, a scale is miscalibrated, or a hypothesis cannot be tested with the data collected means running the study again.

We built a workflow that adds a phase before fieldwork: synthetic simulation. Run your instrument against AI-generated respondents first. Discover the problems before they cost you real participants.

What synthetic simulation actually does

It is not a replacement for real data. It is a filter for instrument quality.

When you run a 20-question survey against 50 synthetic respondents differentiated by persona type — early adopter versus skeptical late majority, cost-conscious procurement professional versus ease-of-adoption-oriented end user — you learn specific things about your instrument:

Questions that produce uniform responses across all personas are likely leading, too vague, or asking about something genuinely universal. Questions that produce high variance by persona are probing genuinely contested terrain, which is usually what you want.
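One way to make this check concrete is to compare persona-level means question by question. The sketch below is illustrative only: the response layout (persona, question, score), the function names `persona_spread` and `flag_uniform`, and the 0.5-point threshold are assumptions made for the example, not part of the workflow described here.

```python
from collections import defaultdict
from statistics import mean


def persona_spread(responses):
    """Return {question: highest persona mean minus lowest persona mean}.

    `responses` is an iterable of (persona, question, score) tuples,
    a hypothetical layout chosen for this sketch.
    """
    scores = defaultdict(lambda: defaultdict(list))
    for persona, question, score in responses:
        scores[question][persona].append(score)

    spread = {}
    for question, by_persona in scores.items():
        means = [mean(values) for values in by_persona.values()]
        spread[question] = max(means) - min(means)
    return spread


def flag_uniform(spread, threshold=0.5):
    """List questions whose persona means differ by less than `threshold`."""
    return sorted(q for q, s in spread.items() if s < threshold)


# Toy data on a 1-5 scale: q1 is answered alike by both personas, q2 is not.
responses = [
    ("early_adopter", "q1", 4), ("early_adopter", "q1", 5),
    ("skeptical_professional", "q1", 4), ("skeptical_professional", "q1", 5),
    ("early_adopter", "q2", 5), ("early_adopter", "q2", 4),
    ("skeptical_professional", "q2", 2), ("skeptical_professional", "q2", 1),
]
spread = persona_spread(responses)
uniform_questions = flag_uniform(spread)
print(uniform_questions)
```

A flagged question is a candidate for review, not a verdict: it may be leading or vague, or it may simply ask about something every persona agrees on.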

Questions that produce unexpected response distributions — where the "analytical" persona responds more like the "intuitive" persona than you'd expect — surface assumption failures in your instrument design before you discover them in real data.

The simulation does not tell you what real respondents will say. It tells you whether your questions are structured well enough to produce interpretable variance when real respondents do answer them.

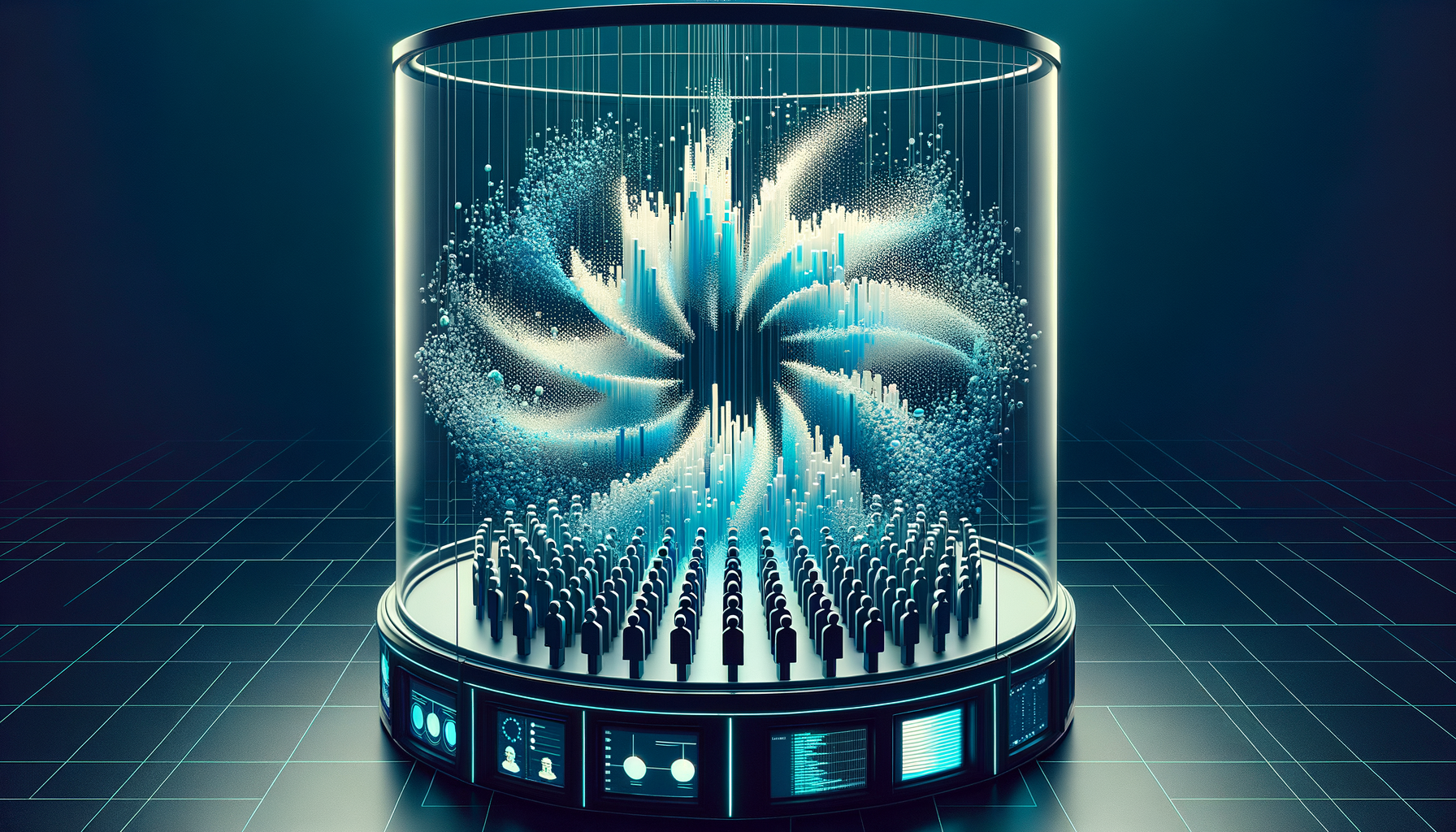

The architecture of synthetic persona diversity

The key to useful synthetic simulation is structured persona diversity. A simulation that generates 50 versions of the same LLM opinion produces no signal. A simulation that generates 50 respondents from 7 distinct archetypal positions, each with a different trait profile, trust disposition, and domain expertise level injected into the system prompt, produces a distribution designed to span the range of readings that real respondents might bring to the instrument.

    {
      "persona": "skeptical_professional",
      "traits": ["analytical", "cost-conscious", "low-trust-in-vendors"],
      "systemPrompt": "You are a B2B buyer with 15 years in procurement. You have been burned by vendor promises before and require specific evidence before accepting claims about ROI or capability..."
    }

The trait profile is the mechanism. It injects not just a role but a disposition — a way of reading questions, a threshold for acceptance, a vocabulary of response. The resulting distribution is not a precise prediction of any real respondent group. It is a structured probe of the instrument's behavior under different reading conditions.
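A minimal sketch of how a small archetype library could be expanded into a respondent pool, assuming archetype records shaped like the JSON above. The `early_adopter` entry, the helper names `render_prompt` and `build_respondents`, and the round-robin allocation are hypothetical choices for illustration. The model call itself is left out because it depends on whichever LLM client is in use.

```python
from collections import Counter
from itertools import cycle, islice

# Archetype records in the same shape as the JSON example above.
# The second entry is invented for this sketch.
ARCHETYPES = [
    {
        "persona": "skeptical_professional",
        "traits": ["analytical", "cost-conscious", "low-trust-in-vendors"],
        "systemPrompt": "You are a B2B buyer with 15 years in procurement...",
    },
    {
        "persona": "early_adopter",
        "traits": ["curious", "risk-tolerant"],
        "systemPrompt": "You enjoy trying new tools before your peers do...",
    },
]


def render_prompt(archetype):
    """Append the trait profile to the role text so the disposition is explicit."""
    traits = ", ".join(archetype["traits"])
    return f'{archetype["systemPrompt"]}\nDisposition: {traits}.'


def build_respondents(archetypes, n):
    """Assign n respondents to archetypes round-robin (an assumed policy)."""
    respondents = []
    for i, archetype in enumerate(islice(cycle(archetypes), n)):
        respondents.append({
            "id": f"synthetic-{i:03d}",
            "persona": archetype["persona"],
            "systemPrompt": render_prompt(archetype),
        })
    return respondents


respondents = build_respondents(ARCHETYPES, 50)
counts = Counter(r["persona"] for r in respondents)
print(counts)
```

Round-robin keeps the archetypes evenly represented. A real pre-study might instead weight archetypes to match the planned sample.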

Where simulation connects to fieldwork

The Panterra toolchain makes the connection explicit. The Respondent Pool Designer specifies the real fieldwork population — demographic constraints, quota targets, screening criteria — and locks it as an immutable JSON specification. That same specification can generate the persona library for a synthetic pre-study. The synthetic respondents are drawn from the same demographic profile as the planned real respondents.
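To illustrate the idea of one locked specification serving both phases, here is a rough sketch. It does not reproduce the Panterra format: the field names (`quotas`, `screening`), the helpers `lock_spec` and `synthetic_allocation`, and the use of a SHA-256 digest as the lock are all assumptions for this example.

```python
import hashlib
import json


def lock_spec(spec):
    """Serialize a pool spec canonically and fingerprint it.

    The canonical string is what both phases would read. The digest
    lets either phase verify that the spec has not changed.
    """
    canonical = json.dumps(spec, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return canonical, digest


def synthetic_allocation(canonical, n):
    """Split n synthetic respondents across segments in quota proportion."""
    quotas = json.loads(canonical)["quotas"]
    total = sum(quotas.values())
    raw = {seg: n * target / total for seg, target in quotas.items()}
    alloc = {seg: int(share) for seg, share in raw.items()}
    # Hand out any remainder to the segments with the largest fractions.
    leftover = n - sum(alloc.values())
    by_fraction = sorted(raw, key=lambda seg: raw[seg] - alloc[seg], reverse=True)
    for seg in by_fraction[:leftover]:
        alloc[seg] += 1
    return alloc


# Hypothetical fieldwork pool: quota targets per age band.
pool_spec = {
    "quotas": {"18-34": 300, "35-54": 400, "55+": 300},
    "screening": ["purchased_in_category_last_12_months"],
}
canonical, digest = lock_spec(pool_spec)
allocation = synthetic_allocation(canonical, 50)
print(allocation)
```

Because the synthetic allocation is derived from the canonical string rather than from a separate definition, the pre-study cannot silently drift away from the fieldwork population.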

This means the pre-study and the fieldwork are aligned by design. Problems discovered in synthetic simulation — a question that systematically confuses the 55+ demographic, a scale that produces ceiling effects in the high-income segment — are problems in the instrument as it will be deployed against the real population.
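A ceiling effect of the kind mentioned above can be checked per segment with very little code. In this sketch the segment labels, the 1-5 scale, and the 50% cutoff are assumptions picked for the example. The right cutoff depends on the scale and the study.

```python
def ceiling_share(scores, scale_max):
    """Fraction of responses sitting at the top of the scale."""
    return sum(1 for s in scores if s == scale_max) / len(scores)


def segments_at_ceiling(by_segment, scale_max=5, threshold=0.5):
    """Return segments where at least `threshold` of answers hit scale_max."""
    return sorted(
        segment
        for segment, scores in by_segment.items()
        if scores and ceiling_share(scores, scale_max) >= threshold
    )


# Toy synthetic responses to one question, grouped by segment.
by_segment = {
    "high_income": [5, 5, 5, 4, 5],
    "middle_income": [3, 4, 5, 2, 4],
}
flagged = segments_at_ceiling(by_segment)
print(flagged)
```

A flagged segment suggests the scale may need more headroom for that group before the instrument goes to field.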

The cases where it works and where it does not

Synthetic simulation works well for instrument refinement: testing question clarity, scale calibration, response distribution shape, and the presence or absence of meaningful variance by segment. It works for hypothesis generation: "which segments will respond differently to this feature description?"

It does not work for measuring real attitudes. Synthetic respondents reflect the model's training data and the traits we inject, not the actual state of a real market. A synthetic majority in favor of a product feature does not predict real market demand. A synthetic distribution of willingness-to-pay does not validate a pricing model.

The discipline is maintaining clarity about what the simulation is for. It is a quality gate on the instrument, not a substitute for data. Organizations that use it to avoid fieldwork will draw wrong conclusions. Organizations that use it to enter fieldwork with a better instrument will collect better data.

That distinction — simulation as preparation, not substitution — is the methodological principle. The tools exist to enforce it by design: the synthetic phase and the real phase use the same instrument format and the same pool specification, but they produce separate, incompatible outputs. The synthetic data feeds instrument revision. The real data feeds conclusions.
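One way such a separation could be enforced in code is to give the two phases distinct result types and have each downstream step accept only one of them. The sketch below is an assumption about how that might look, not a description of the actual tools. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SyntheticResults:
    """Output of the synthetic pre-study. Feeds instrument revision only."""
    spec_digest: str
    rows: tuple


@dataclass(frozen=True)
class FieldResults:
    """Output of real fieldwork. Feeds conclusions only."""
    spec_digest: str
    rows: tuple


def revise_instrument(results):
    """Accept synthetic results only."""
    if not isinstance(results, SyntheticResults):
        raise TypeError("instrument revision expects SyntheticResults")
    return f"review {len(results.rows)} synthetic rows for instrument issues"


def draw_conclusions(results):
    """Accept field results only, so synthetic data cannot reach conclusions."""
    if not isinstance(results, FieldResults):
        raise TypeError("conclusions require FieldResults from real respondents")
    return f"analyze {len(results.rows)} field rows"


synthetic = SyntheticResults(spec_digest="abc123", rows=(("q1", 4), ("q2", 2)))
print(revise_instrument(synthetic))

try:
    draw_conclusions(synthetic)
except TypeError as err:
    print(f"rejected: {err}")
```

Both types carry the same spec digest field, which reflects the shared pool specification, while the type check keeps the two data streams from being mixed.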