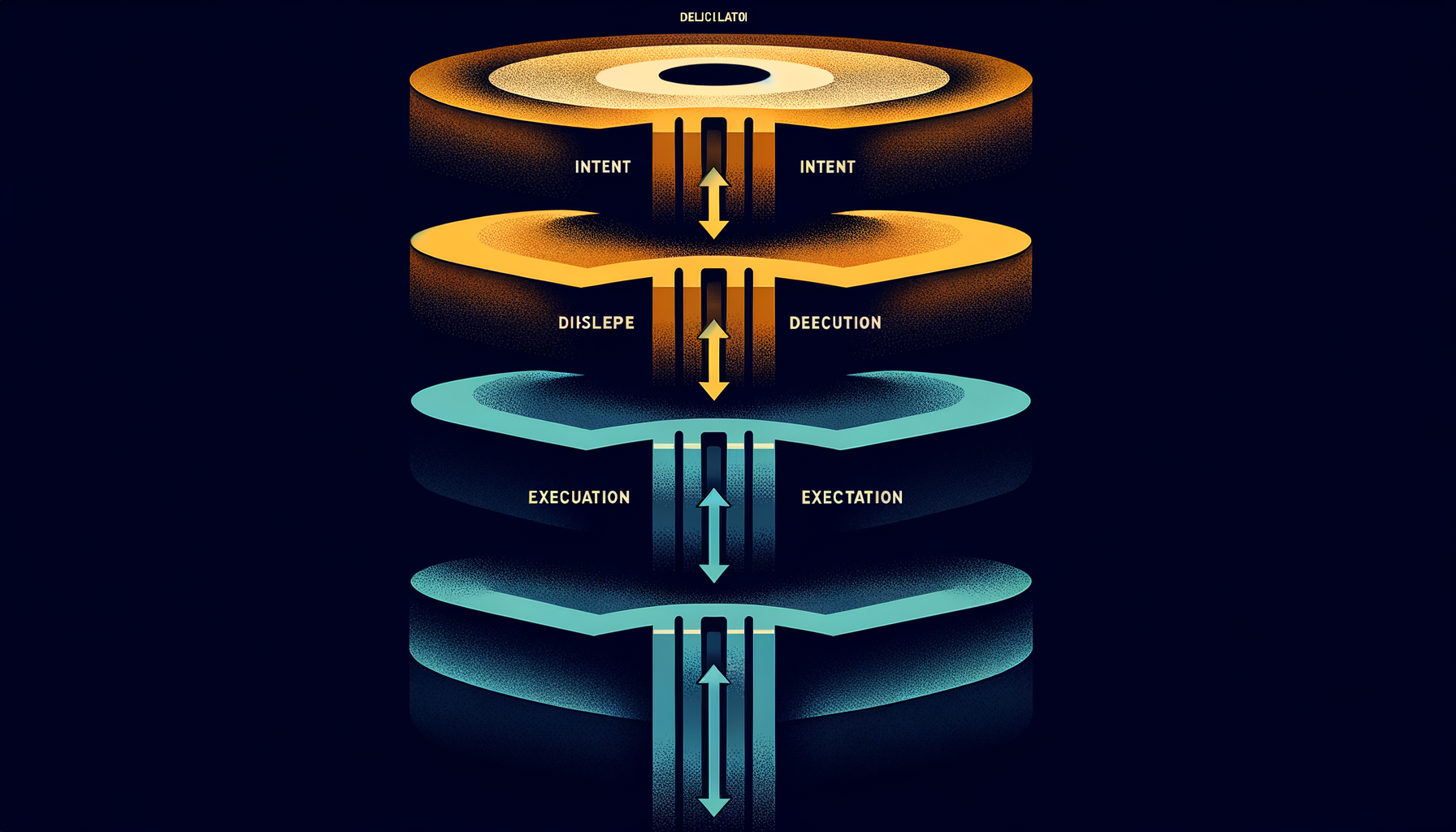

The Delegation Stack: Five Layers from Intent to Execution

#worksona #architecture #delegation #multi-agent #design

David Olsson

The central architectural question in multi-agent systems is not which model to use. It is how to translate human intent into coordinated machine execution across multiple specialized components without the whole thing collapsing under its own complexity.

We built an answer to that question. We call it the delegation stack.

What it is

The delegation stack is a five-layer model that connects a user's expressed intent to the APIs and models that satisfy it. Each layer has a single responsibility. Each layer can be improved independently. Each layer can fail gracefully without corrupting the others.

Layer 5 — User Intent. The user says what they want in natural language. "Help me hire a new engineer." No specification of which agents to call or in what order. Intent only.

Layer 4 — Pattern Selection. The delegator analyzes the task and selects a coordination pattern. Simple queries go direct. Complex decomposable tasks go hierarchical. Domain-specific questions route to specialists. Parallel independent subtasks fan out to peer agents. The pattern is chosen, not hard-coded.

Layer 3 — Agent Orchestration. Selected agents execute their part of the task. An HR agent generates job requirements. A Finance agent models compensation. An Engineering agent defines technical criteria. They may run in parallel or in sequence depending on data dependencies. Each agent receives only the context it needs.

Layer 2 — Skills and Tools. Each agent invokes specialized capabilities: salary_benchmarking, architecture_review, candidate_sourcing. Skills are loosely coupled to agents via the Model Context Protocol. An agent doesn't contain its tools — it calls them.

Layer 1 — LLM Providers. The actual inference calls. GPT-5 for complex reasoning. Claude for long-context synthesis. Gemini for retrieval-augmented tasks. Ollama for local, private processing. Provider selection is a runtime decision, not a deployment configuration.
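As a rough illustration of Layer 3, here is a minimal sketch of dependency-aware orchestration: steps whose inputs are ready run in parallel, and each agent sees only the context keys it declares. Every name here (`AgentStep`, `needs`, `orchestrate`, `scopeContext`) is hypothetical and not taken from the actual stack, and the assumption that the Finance agent waits on the HR agent's output is ours, chosen only to show sequencing.

```typescript
// Hypothetical types: these names are illustrative, not the stack's real API.
type Context = Record<string, string>;

interface AgentStep {
  name: string;
  needs: string[]; // context keys this agent depends on
  run: (ctx: Context) => Promise<string>;
}

// Build a context containing only the keys an agent asked for.
function scopeContext(all: Context, keys: string[]): Context {
  const scoped: Context = {};
  for (const key of keys) {
    const value = all[key];
    if (value !== undefined) scoped[key] = value;
  }
  return scoped;
}

// Run steps in waves: every step whose inputs exist runs in parallel,
// and its output is stored under the step's name for later steps.
async function orchestrate(steps: AgentStep[], initial: Context): Promise<Context> {
  const results: Context = { ...initial };
  let pending = steps.slice();
  while (pending.length > 0) {
    const ready = pending.filter((s) => s.needs.every((k) => k in results));
    if (ready.length === 0) {
      throw new Error("Unsatisfiable dependencies: " + pending.map((s) => s.name).join(", "));
    }
    const outputs = await Promise.all(
      ready.map(async (s) => ({
        name: s.name,
        output: await s.run(scopeContext(results, s.needs)),
      }))
    );
    for (const { name, output } of outputs) results[name] = output;
    pending = pending.filter((s) => ready.indexOf(s) === -1);
  }
  return results;
}

// Stub agents standing in for real LLM-backed ones.
const hiringSteps: AgentStep[] = [
  { name: "hr", needs: ["intent"], run: async (ctx) => `requirements for ${ctx["intent"]}` },
  { name: "engineering", needs: ["intent"], run: async (ctx) => `criteria for ${ctx["intent"]}` },
  // Assumed dependency: compensation modeling waits for the HR requirements.
  { name: "finance", needs: ["hr"], run: async (ctx) => `compensation for [${ctx["hr"]}]` },
];
```

Calling `orchestrate(hiringSteps, { intent: "hire a new engineer" })` would run the HR and Engineering stubs together in the first wave and the Finance stub in the second. A production scheduler would likely start each step as soon as its own inputs land rather than waiting for a whole wave, but the wave version keeps the idea visible.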

Why it scales

The stack scales because each layer is independently evolvable.

Add a new LLM provider at Layer 1 and every agent in Layer 3 can use it immediately. Define a new delegation pattern at Layer 4 and all tasks can select it. Add a skill to an agent at Layer 2 and every workflow using that agent gains the capability. No layer needs to know the internals of any other layer.
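One way to picture this independence is a registry that agents look providers up in at call time. The sketch below is an assumption about how such an interface could look, not the stack's real code: `Provider`, `ProviderRegistry`, and `Agent` are hypothetical names, and the providers are string-returning stubs rather than real model clients.

```typescript
// Hypothetical Layer 1 interface; a real provider would call a model API.
interface Provider {
  name: string;
  complete: (prompt: string) => string;
}

class ProviderRegistry {
  private providers = new Map<string, Provider>();

  register(provider: Provider): void {
    this.providers.set(provider.name, provider);
  }

  get(name: string): Provider {
    const provider = this.providers.get(name);
    if (!provider) throw new Error(`Unknown provider: ${name}`);
    return provider;
  }

  names(): string[] {
    return Array.from(this.providers.keys());
  }
}

// A Layer 3 agent holds the registry, never a specific provider.
class Agent {
  role: string;
  private registry: ProviderRegistry;

  constructor(role: string, registry: ProviderRegistry) {
    this.role = role;
    this.registry = registry;
  }

  // The provider is resolved per call, so selection stays a runtime decision.
  ask(providerName: string, prompt: string): string {
    return this.registry.get(providerName).complete(`[${this.role}] ${prompt}`);
  }
}

const registry = new ProviderRegistry();
registry.register({ name: "stub-a", complete: (p) => `stub-a:${p}` });

const hrAgent = new Agent("hr", registry);
const financeAgent = new Agent("finance", registry);

// Registering a provider later needs no change to the agents created above.
registry.register({ name: "stub-b", complete: (p) => `stub-b:${p}` });
const reply = financeAgent.ask("stub-b", "model compensation");
```

The agents were constructed before `stub-b` existed, yet both can use it, because the only thing they depend on is the registry's lookup interface.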

This is architectural modularity applied to AI orchestration. The comparison to microservices is imprecise but directionally right: each layer has a defined interface, a single responsibility, and can be replaced without breaking the layers above or below.

The pattern selection layer is the core innovation

Most discussions of multi-agent systems focus on the agents themselves — which models to use, how to prompt them, how to handle tool calls. The delegation stack's distinguishing contribution is Layer 4: the explicit, runtime selection of coordination patterns based on task structure.

When a task arrives, we don't ask "which agent should handle this?" We ask "what is the structure of this task, and what coordination pattern does that structure require?"

A task that can be parallelized into independent subtasks gets a fan-out pattern. A task that requires escalating judgment gets a hierarchical pattern. A task with a clear domain expert available gets routed to that specialist. A task with competing perspectives gets a peer-review pattern, in which agents evaluate each other's outputs. A simple query that needs no coordination goes direct.

```typescript
// Pattern selection is explicit: a reasoned decision, not a lookup table
const pattern = await delegator.selectPattern({
  task,
  complexity: await delegator.scoreComplexity(task),
  availableAgents: agentRegistry.list()
});
// Returns: 'hierarchical' | 'fan-out' | 'specialist' | 'peer-review' | 'direct'
```

The delegator doesn't hard-code these decisions. It reasons about task structure using the same LLMs that will eventually execute the task. The pattern selection step is itself an AI-mediated judgment.
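To make the idea of an AI-mediated selection concrete, here is a hedged sketch of what a `selectPattern` implementation could look like. The prompt wording, the injected `complete` function, the 0 to 1 complexity scale, and the fallback to `'direct'` on unrecognized output are all assumptions of this sketch; the actual delegator may work quite differently.

```typescript
type Pattern = "hierarchical" | "fan-out" | "specialist" | "peer-review" | "direct";

const PATTERNS: readonly Pattern[] = [
  "hierarchical", "fan-out", "specialist", "peer-review", "direct",
];

// Hypothetical signature for whichever model call the delegator uses.
type Complete = (prompt: string) => Promise<string>;

interface SelectionInput {
  task: string;
  complexity: number; // assumed here to be a score between 0 and 1
  availableAgents: string[];
}

// Type guard: true only when the text is exactly one of the known patterns.
function isPattern(value: string): value is Pattern {
  return (PATTERNS as readonly string[]).indexOf(value) !== -1;
}

async function selectPattern(input: SelectionInput, complete: Complete): Promise<Pattern> {
  const prompt = [
    "Choose one coordination pattern for the task below.",
    `Options: ${PATTERNS.join(", ")}`,
    `Task: ${input.task}`,
    `Complexity (0-1): ${input.complexity}`,
    `Available agents: ${input.availableAgents.join(", ")}`,
    "Answer with the option name only.",
  ].join("\n");

  const answer = (await complete(prompt)).trim().toLowerCase();

  // Model output is untrusted text: accept it only if it names a known
  // pattern, otherwise fall back to the simplest one (an assumption).
  return isPattern(answer) ? answer : "direct";
}
```

Injecting `complete` keeps the selector testable with a stub, for example `selectPattern(input, async () => "fan-out")`, and leaves the choice of provider to the caller.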

The gap we're closing

The delegation stack is complete as an architecture. What it lacks is observability: we can see which pattern was selected and which agents ran, but we cannot yet see why that pattern was selected, how much each layer cost, or where latency accumulated across a multi-agent workflow.

That observability layer is what gets built next. The stack is the skeleton. Observability is the nervous system that makes the skeleton debuggable and operable at scale.
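As a sketch of what such a layer might record, the example below tags each unit of work with its layer, duration, cost, and an optional rationale, then totals duration and cost per layer. The `Span` shape, its field names, and the sample numbers are invented for illustration; nothing here describes an existing implementation.

```typescript
// Hypothetical trace record; field names are illustrative only.
interface Span {
  layer: 1 | 2 | 3 | 4 | 5;
  label: string;
  durationMs: number;
  costUsd: number;
  rationale?: string; // e.g. why Layer 4 chose a given pattern
}

interface LayerTotals {
  durationMs: number;
  costUsd: number;
}

// Sum duration and cost per layer. Durations of parallel spans are added
// together, so this measures total work, not wall-clock latency.
function summarizeByLayer(spans: Span[]): Map<number, LayerTotals> {
  const totals = new Map<number, LayerTotals>();
  for (const span of spans) {
    const current = totals.get(span.layer) ?? { durationMs: 0, costUsd: 0 };
    totals.set(span.layer, {
      durationMs: current.durationMs + span.durationMs,
      costUsd: current.costUsd + span.costUsd,
    });
  }
  return totals;
}

// Made-up sample data, not measurements.
const sampleSpans: Span[] = [
  { layer: 4, label: "selectPattern", durationMs: 120, costUsd: 0.25,
    rationale: "task splits into independent subtasks" },
  { layer: 3, label: "hr agent", durationMs: 900, costUsd: 0.5 },
  { layer: 3, label: "finance agent", durationMs: 700, costUsd: 0.25 },
];

const perLayer = summarizeByLayer(sampleSpans);
```

With this sample, `perLayer.get(3)` holds the combined duration and cost of both agent spans, and the Layer 4 span carries the rationale that is currently invisible.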